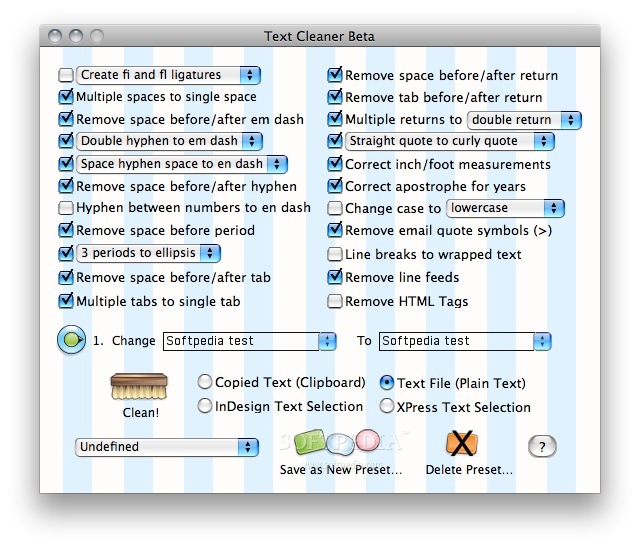

User-generated content on the Web and in social media is often dirty. Preprocess your scraped data with clean-text to create a normalized text representation. For instance, it turns the corrupted input "A bunch of ‘new’ references, including ()." into the clean output "A bunch of 'new' references, including ()."

Install the package with `pip install clean-text`. Carefully choose the arguments that fit your task. The example call ends with `... "you are right ", replace_with_email="", replace_with_phone_number="", replace_with_number="", replace_with_digit="0", replace_with_currency_symbol="", lang="en")`, where `lang` is set to `'de'` for German special handling.

You may also use only specific functions for cleaning; for this, take a look at the source code. So far, only English and German are fully supported, but the package should work for the majority of Western languages. If you need some special handling for your language, feel free to contribute.

There is also a scikit-learn compatible API to use in your pipelines, and all of the parameters above work there as well. After `from cleantext.sklearn import CleanTransformer`, the line `cleaner = CleanTransformer(no_punct=False, lower=False)` creates a transformer that can be used in a scikit-learn pipeline.

If you have a question, found a bug, or want to propose a new feature, have a look at the issues page. Pull requests are especially welcome when they fix bugs or improve the code quality. If you don't like the output of clean-text, consider adding a test with your specific input and desired output. clean-text is built upon the work by Burton DeWilde for Textacy. Related work includes generic text cleaning packages and full-blown NLP libraries with some text cleaning. Other packages in this space include a text processing pipeline for NLP problems with ready-to-use functions and text classification models, and an NLP text cleaning and processing pipeline.

The library that we'll be using to look up pre-trained embedding vectors for our cleaned tokens is gensim. It has multiple pre-trained embeddings available for download; you can review these in the word2vec module's inline documentation.
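The kind of normalization clean-text is described as performing (straightening quotes, replacing e-mail addresses and digits with placeholder tokens) can be approximated with the standard library. The sketch below is not clean-text's implementation: `normalize_text` is a hypothetical helper, its keyword names only mirror the ones quoted above, and the `"<EMAIL>"` default is an assumption chosen for illustration.

```python
import re
import unicodedata

# Map curly quotes to their plain ASCII equivalents.
QUOTE_MAP = str.maketrans({"\u2018": "'", "\u2019": "'", "\u201c": '"', "\u201d": '"'})
# Deliberately simple patterns; real-world e-mail matching is more involved.
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
DIGIT_RE = re.compile(r"\d")


def normalize_text(text, replace_with_email="<EMAIL>", replace_with_digit="0", lower=False):
    """Hypothetical helper: return a normalized copy of `text`."""
    text = unicodedata.normalize("NFKC", text)       # unify compatibility characters
    text = text.translate(QUOTE_MAP)                 # straighten curly quotes
    text = EMAIL_RE.sub(replace_with_email, text)    # mask e-mail addresses
    text = DIGIT_RE.sub(replace_with_digit, text)    # mask every digit
    text = re.sub(r"\s+", " ", text).strip()         # collapse runs of whitespace
    return text.lower() if lower else text


print(normalize_text("A bunch of \u2018new\u2019 references, mail me@example.com  in 2024"))
```

E-mail addresses are masked before digits so that digits inside an address do not survive as zeros in the output.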
Analyzing this kind of discourse requires different cleaning methods, refinement, and categorization. To demonstrate some natural language processing text cleaning methods, it helps to look at what goes on behind the curtain when we talk about cleaning or tokenizing text. Tokenization starts with `from nltk.tokenize import word_tokenize`, then loops through each line of text and tokenizes it.

The combined cleaning function collects the enabled steps in `enabled_operations`: it appends `remove_punctuation`, then `lemmatize` if `enable_lemmatization` is set, and `stem` if `enable_stemming` is set. It prints the enabled operations, runs each operation over all lines, and returns `cleaned_text_lines`. A typical call is `clean_list_of_text(sample_lines, enable_stopword_removal=True, enable_punctuation_removal=True, enable_lemmatization=True)`.

The next step is vector embedding. Now that we finally have our text cleaned, is it ready for machine learning? Not quite. Most models require numeric inputs rather than strings. To provide them, embeddings are often used, in which strings are converted into vectors. You can think of this as numerically capturing the information and meaning of text in a fixed-length numerical vector. We'll walk through an example using gensim; however, many of the deep learning frameworks may have ways to quickly load pre-trained embeddings as well.
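To make the idea of a fixed-length vector concrete, here is a minimal sketch that looks up a vector for each cleaned token and averages them. The `TOY_VECTORS` table and the `embed_tokens` helper are hypothetical: real vectors would be loaded from pre-trained embeddings (for example through gensim) and have far more dimensions, and averaging is only one common way to combine token vectors, not necessarily the one used in the walkthrough.

```python
# Toy stand-in for a pre-trained embedding table (assumption, for illustration only).
TOY_VECTORS = {
    "man": [1.0, 0.0, 2.0],
    "floor": [0.0, 1.0, 0.0],
    "room": [2.0, 1.0, 1.0],
}


def embed_tokens(tokens, vectors, dim=3):
    """Average the vectors of all known tokens into one fixed-length vector."""
    known = [vectors[token] for token in tokens if token in vectors]
    if not known:
        # No token has a vector: fall back to a zero vector of the same length.
        return [0.0] * dim
    # zip(*known) groups the values of each dimension across tokens.
    return [sum(component) / len(known) for component in zip(*known)]


# "sweeping" has no entry in the toy table, so it is skipped.
print(embed_tokens(["man", "sweeping", "floor"], TOY_VECTORS))
```

Whatever the number of tokens, the result always has `dim` entries, which is what lets a model consume texts of different lengths.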

Python is the de facto programming language for processing text. For stop word removal, all the text in the corpus is compared to the list of stop words, and any word that matches an entry in the stop word list is removed. In the combined cleaning function, this step is added with `enabled_operations.append(remove_stopwords)`, followed by the check of `enable_punctuation_removal`.
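The comparison against a stop word list can be sketched in a few lines. The short `STOP_WORDS` set below is an illustrative assumption; an NLTK-based pipeline would typically take its list from `nltk.corpus.stopwords.words("english")` instead, and the function body here is a guess at the behavior, not the original code.

```python
# Tiny illustrative stop word list (assumption); real lists are much longer.
STOP_WORDS = frozenset({"the", "a", "an", "and", "is", "it", "of", "to", "in"})


def remove_stopwords(tokens, stop_words=STOP_WORDS):
    """Drop every token that matches an entry in the stop word list."""
    # Compare in lower case so that "The" and "the" are treated alike.
    return [token for token in tokens if token.lower() not in stop_words]


corpus = [["The", "door", "is", "open"], ["It", "was", "a", "dream"]]
filtered = [remove_stopwords(tokens) for tokens in corpus]
print(filtered)
```

Using a set rather than a list keeps each membership check constant-time, which matters once the corpus grows.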

In NLP tasks, we usually apply some text cleansing before we move on to the machine learning part. Task-specific cleaning entails manual tokenization, tokenization and cleaning with NLTK, additional text cleaning considerations, and tips for cleaning text for word embedding, with more to follow.

The text cleaning data source for NLTK-Data-Cleaning is the book Metamorphosis by Franz Kafka, which is available for free from Project Gutenberg. A small sample of texts from Project Gutenberg also appears in the NLTK corpus collection (see "3.1 Accessing Text from the Web and from Disk"). The stopwords package in the NLTK library is used for removing stop words.

Lemmatization converts each token to a root word. The `lemmatize(input_text)` function instantiates `WordNetLemmatizer()` and then lemmatizes the text once for each part of speech, so that the lemmatized text from one loop run becomes the input to the next. The result is stored in `tokens_lemmatized`, and the text can be compared before and after lemmatization.

Stemming is similar to lemmatization, but rather than converting to a root word it chops off suffixes and prefixes. Applying stemming to "sweeping" removes the suffix and yields the word "sweep". There are many different flavors of stemming algorithms; this example uses the SnowballStemmer from NLTK. I prefer lemmatization since it is less aggressive and the resulting words are still valid; however, stemming is still sometimes used, so I show it here as well. In the combined cleaning function, stemming is off by default (`enable_stemming=False`); the function first builds the list `enabled_operations` and then checks `enable_stopword_removal`.
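The combined cleaning function can be sketched as follows. This is a minimal, standard-library-only sketch under stated assumptions: the helpers `tokenize`, `remove_stopwords`, `remove_punctuation`, `lemmatize`, and `stem` are simplified stand-ins written for illustration (the NLTK-based versions are not reproduced here), and both the `text_lines` parameter name and the choice to start `enabled_operations` with `tokenize` are guesses.

```python
import string

STOP_WORDS = {"the", "a", "an", "is", "was", "and", "of", "to", "in"}  # illustrative only


def tokenize(line):
    # Stand-in for word_tokenize: lower-case and split on whitespace.
    return line.lower().split()


def remove_stopwords(tokens):
    return [t for t in tokens if t not in STOP_WORDS]


def remove_punctuation(tokens):
    # Strip leading/trailing punctuation and drop tokens that become empty.
    stripped = (t.strip(string.punctuation) for t in tokens)
    return [t for t in stripped if t]


def lemmatize(tokens):
    # Placeholder: a real version would call WordNetLemmatizer per part of speech.
    return tokens


def stem(tokens):
    # Crude "-ing" stripping, far simpler than SnowballStemmer.
    return [t[:-3] if t.endswith("ing") and len(t) > 5 else t for t in tokens]


def clean_list_of_text(text_lines, enable_stopword_removal=True,
                       enable_punctuation_removal=True,
                       enable_lemmatization=True, enable_stemming=False):
    # Get list of operations (assumption: tokenization always runs first).
    enabled_operations = [tokenize]
    if enable_stopword_removal:
        enabled_operations.append(remove_stopwords)
    if enable_punctuation_removal:
        enabled_operations.append(remove_punctuation)
    if enable_lemmatization:
        enabled_operations.append(lemmatize)
    if enable_stemming:
        enabled_operations.append(stem)
    print(f"Enabled Operations: {len(enabled_operations)}")
    # Run all operations, each one over all lines.
    cleaned_text_lines = text_lines
    for operation in enabled_operations:
        cleaned_text_lines = [operation(line) for line in cleaned_text_lines]
    return cleaned_text_lines


sample_lines = ["The man was sweeping the floor.", "Gregor is in the room!"]
print(clean_list_of_text(sample_lines, enable_stemming=True))
```

Keeping the steps in a list makes the order explicit and lets each flag switch one step on or off without touching the loop that applies them.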
